Using 🤗 datasets for image search

Using 🤗 datasets to make an image search engine for British Library Book Illustrations

- The dataset: "Digitised Books - Images identified as Embellishments. c. 1510 - c. 1900. JPG"

- Install required packages

- 📸 Loading the images

- Push all the things to the hub!

- Creating embeddings 🕸

- Image search

- Creating a huggingface space? 🤷🏼

- Conclusion

tl;dr: It's really easy to use the huggingface datasets library to create an image search application, but it might not be suitable for sharing. Update: an updated version of this post is on the 🤗 blog!

🤗 Datasets is a library for easily accessing and sharing datasets, and evaluation metrics for Natural Language Processing (NLP), computer vision, and audio tasks. (source)

When datasets was first launched, it was mostly associated with text data and NLP. However, datasets now supports images. In particular, there is now a datasets feature type for images. In this blog post I play around with this new datatype, in combination with some other nice features of the library, to make an image search app.

To start, let's take a look at the image feature. We can use the wonderful rich library to poke around Python objects (functions, classes etc.)

from rich import inspect
from datasets.features import features

inspect(features.Image, help=True)

We can see there are a few different ways in which we can pass in our images. We'll come back to this in a little while.

A really nice feature of the datasets library (beyond the functionality for processing data, memory mapping etc.) is that you get some nice things for free. One of these is the ability to add a faiss index. faiss is a "library for efficient similarity search and clustering of dense vectors".

The datasets docs show an example of using faiss for text retrieval. What I'm curious about is using the faiss index to search for images. This can be super useful for a number of reasons but also comes with some potential issues.

The dataset: "Digitised Books - Images identified as Embellishments. c. 1510 - c. 1900. JPG"

This is a dataset of images which have been pulled from a collection of digitised books from the British Library. These images come from books across a wide time period and from a broad range of domains. They were extracted using information in the OCR output for each book. As a result, it's known which book each image came from, but not necessarily anything else about the image, i.e. what it depicts.

Some attempts to help overcome this have included uploading the images to Flickr. This allows people to tag the images or put them into various different categories.

There have also been projects to tag the dataset using machine learning. This work already makes it possible to search by tags, but we might want a 'richer' ability to search. For this particular experiment I will work with a subset of the collection which contains "embellishments". This dataset is a bit smaller, so it is better suited to experimenting with. We can get the data from the BL repository: https://doi.org/10.21250/db17

!aria2c -x8 -o dig19cbooks-embellishments.zip "https://bl.iro.bl.uk/downloads/ba1d1d12-b1bd-4a43-9696-7b29b56cdd20?locale=en"

!unzip -q dig19cbooks-embellishments.zip

import sys

!{sys.executable} -m pip install datasets

Now that we have the data downloaded, we'll load it into datasets. There are various ways of doing this. To start with, we can grab all of the files we need.

from pathlib import Path

files = list(Path('embellishments/').rglob("*.jpg"))

Since the file path encodes the year of publication of the book the image came from, let's create a function to grab that.

def get_parts(f: Path):
    # paths look like embellishments/<year>/<filename>.jpg
    _, year, fname = f.parts
    return year, fname
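Unpacking exactly three parts raises a ValueError if the images ever sit deeper in the directory tree (for example under an absolute path). A slightly more defensive variant, sketched under the assumption that the year is always the name of the image's parent directory, uses `Path.parent.name` and `Path.name` instead. The function name and the example paths are hypothetical.

```python
from pathlib import PurePath, PurePosixPath


def get_parts_from_parent(f: PurePath) -> tuple[str, str]:
    """Return (year, filename), assuming the year is the parent directory name."""
    # Hypothetical helper: only the last two path components are used,
    # so it works however deep the embellishments folder is.
    return f.parent.name, f.name


# Example paths are made up for illustration.
print(get_parts_from_parent(PurePosixPath("embellishments/1850/example.jpg")))
print(get_parts_from_parent(PurePosixPath("/data/bl/embellishments/1850/example.jpg")))
```

Both calls print `('1850', 'example.jpg')`.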

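import io

from PIL import Image

def load_image(path):
    # Read the raw bytes first so the resulting PIL image has no filename attribute
    with open(path, 'rb') as f:
        im = Image.open(io.BytesIO(f.read()))
    im.thumbnail((224, 224))  # resizes in place, keeping the aspect ratio
    return im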
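`thumbnail((224, 224))` shrinks an image so that it fits inside a 224×224 box while keeping its aspect ratio, and leaves images that already fit untouched. A simplified sketch of the resulting size follows; `thumbnail_size` is a hypothetical helper, and Pillow's own rounding rules can differ by a pixel in edge cases.

```python
def thumbnail_size(width: int, height: int, max_size=(224, 224)) -> tuple[int, int]:
    """Approximate the size produced by PIL's Image.thumbnail.

    Both sides are scaled by the same factor so the image fits inside
    max_size; images that already fit are never enlarged.
    """
    max_w, max_h = max_size
    if width <= max_w and height <= max_h:
        return width, height  # thumbnail() never upscales
    scale = min(max_w / width, max_h / height)
    return max(1, round(width * scale)), max(1, round(height * scale))


print(thumbnail_size(1000, 500))  # landscape: width limited -> (224, 112)
print(thumbnail_size(300, 600))   # portrait: height limited -> (112, 224)
print(thumbnail_size(100, 100))   # already small: unchanged -> (100, 100)
```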

im = load_image(files[0])

im

inspect(im)

We can also check the filename attribute directly:

im.filename

Pillow loads images lazily: Image.open identifies the file straight away but only reads the image data when it's needed, using the filepath to access it. We can see the filename attribute is present if we open the image from its filepath:

im_file = Image.open(files[0])

im_file.filename

I don't want the filename attribute present here because I want to use datasets not only to process our images but also to store them. If we pass a Pillow object with the filename attribute, datasets will use that path for loading the image. This is often what we'd want, but not here, for reasons we'll see shortly.
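To make that distinction concrete, here is a simplified sketch of the decision: an image with a non-empty filename is recorded as a path reference, while one without a filename is stored as raw bytes. `FakeImage` and `encode_image_sketch` are hypothetical stand-ins, and this approximates the behaviour described above rather than reproducing the library's actual code.

```python
from dataclasses import dataclass


@dataclass
class FakeImage:
    """Hypothetical stand-in for a PIL image: a filename plus encoded bytes."""
    filename: str
    data: bytes


def encode_image_sketch(image: FakeImage) -> dict:
    # Opened from a filepath: only the path is recorded, so the file
    # must still exist wherever the dataset is used later.
    if getattr(image, "filename", ""):
        return {"path": image.filename, "bytes": None}
    # Opened from an in-memory buffer (empty filename): the bytes
    # themselves are kept with the rest of the dataset.
    return {"path": None, "bytes": image.data}


from_path = FakeImage(filename="embellishments/1850/example.jpg", data=b"...")
from_buffer = FakeImage(filename="", data=b"...")
print(encode_image_sketch(from_path))
print(encode_image_sketch(from_buffer))
```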

Next we'll collect the information about our images. We'll loop through all the image files and store the filename, year and path for each one in a dictionary; the images themselves are loaded in a later step.

from collections import defaultdict

from tqdm import tqdm

data = defaultdict(list)

for file in tqdm(files):
    try:
        # load_image(file)
        year, fname = get_parts(file)
        data['fname'].append(fname)
        data['year'].append(year)
        data['path'].append(str(file))
    except Image.UnidentifiedImageError:
        pass

We can now use the from_dict method to create a new dataset.

from datasets import Dataset

dataset = Dataset.from_dict(data)
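`Dataset.from_dict` takes a mapping from column names to lists of values, which is why the loop above appends to one list per column. A small sketch, using a hypothetical helper name, of turning row-style records into that columnar shape with a basic check that every column has the same length:

```python
from collections import defaultdict


def records_to_columns(records: list[dict]) -> dict[str, list]:
    """Convert a list of row dicts into a dict of column lists."""
    columns = defaultdict(list)
    for record in records:
        for key, value in record.items():
            columns[key].append(value)
    # Basic sanity check: every column must end up with the same length.
    lengths = {len(values) for values in columns.values()}
    if len(lengths) > 1:
        raise ValueError(f"columns have different lengths: {lengths}")
    return dict(columns)


rows = [
    {"fname": "a.jpg", "year": "1850", "path": "embellishments/1850/a.jpg"},
    {"fname": "b.jpg", "year": "1851", "path": "embellishments/1851/b.jpg"},
]
print(records_to_columns(rows))
```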

We can look at one example to see what this looks like.

dataset[0]

Loading our images

At the moment our dataset has the filename and full path for each image. However, we want to have an actual image loaded into our dataset. We already have a load_image function. This gets us most of the way there, but we might also want to add some ability to deal with image errors. The datasets library has gained increased support for handling None types; this includes support for None types for images (see pull request 3195).

We'll wrap our load_image function in a try block, catch an Image.UnidentifiedImageError and return None if we can't load the image.

def try_load_image(filename):
    try:
        image = load_image(filename)
        if isinstance(image, Image.Image):
            return image
    except Image.UnidentifiedImageError:
        return None

%%time
dataset = dataset.map(lambda example: {"img": try_load_image(example['path'])}, writer_batch_size=50)

Let's see what this looks like

dataset

We have an image column; let's check the types of all our features:

dataset.features

This is looking great already. Since we might have some None types for images let's get rid of these.

dataset = dataset.filter(lambda example: example['img'] is not None)

dataset

You'll see we lost a few rows by doing this filtering. We should now just have images which are successfully loaded.
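The pattern used here (return None on failure, then drop the None rows) doesn't depend on datasets. A plain-Python sketch, where the hypothetical `parse_or_none` stands in for `try_load_image` and a `ValueError` stands in for `Image.UnidentifiedImageError`:

```python
from typing import Optional


def parse_or_none(raw: str) -> Optional[int]:
    # Hypothetical stand-in for try_load_image: return None instead of raising.
    try:
        return int(raw)
    except ValueError:
        return None


rows = [{"path": "1"}, {"path": "not-a-number"}, {"path": "3"}]

# Step 1 ("map"): add a new column, which may contain None.
rows = [{**row, "value": parse_or_none(row["path"])} for row in rows]
# Step 2 ("filter"): keep only the rows that loaded successfully.
rows = [row for row in rows if row["value"] is not None]

print(rows)  # [{'path': '1', 'value': 1}, {'path': '3', 'value': 3}]
```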

If we access an example and index into the img column we'll see our image 😃

dataset[10]['img']

Push all the things to the hub!

One of the super awesome things about the huggingface ecosystem is the huggingface hub. We can use the hub to access models and datasets. Often this is used for sharing work with others, but it can also be a useful tool for work in progress. The datasets library recently added a push_to_hub method that allows you to push a dataset to the hub with minimal fuss. This can be really helpful, since it lets you pass around a dataset with all the transformations etc. already done.

When I started playing around with this feature, I was also keen to see if it could be used as a way of 'bundling' everything together. This is where I noticed that if you push a dataset containing images which Pillow loaded from filepaths, the version on the hub won't have the images attached. If you always have the image files in the same place when you work with the dataset, this doesn't matter. If you want the images stored in the parquet file(s) associated with the dataset, you need to load them without the filename attribute present (there might be another way of ensuring that datasets doesn't rely on the image file being on the file system; if you know of one, I'd love to hear about it).

Since we loaded our images this way, when we download the dataset from the hub onto a different machine we have the images already there 🤗

For now we'll push the dataset to the hub and keep it private initially.

dataset.push_to_hub('davanstrien/embellishments', private=True)

Switching machines

At this point I've created a dataset and moved it to the huggingface hub. This means it is possible to pick up the work/dataset elsewhere.

In this particular example, having access to a GPU is important. So the next parts of this notebook are run on Colab instead of locally on my laptop.

We'll need to log in since the dataset is currently private.

!huggingface-cli login

Once we've done this we can load our dataset

from datasets import load_dataset

dataset = load_dataset("davanstrien/embellishments", use_auth_token=True)

We now have a dataset with a bunch of images in it. To begin creating our image search app, we need to create some embeddings for these images. There are various ways to do this, but one option is to use the CLIP models via the sentence_transformers library. The CLIP model from OpenAI learns a joint representation for both images and text, which is very useful here since we want to be able to input text and get back an image. We can download the model using the SentenceTransformer class.

from sentence_transformers import SentenceTransformer, util

model = SentenceTransformer('clip-ViT-B-32')
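Because CLIP maps images and text into the same vector space, "how well does this image match this text?" becomes "how similar are these two vectors?". A minimal sketch of cosine similarity, the usual measure for this, using made-up 3-dimensional vectors in place of real CLIP embeddings (the `util` module imported above also provides a `cos_sim` helper for tensors):

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1 = same direction, 0 = orthogonal."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Made-up toy embeddings, not real CLIP outputs.
text_embedding = [0.9, 0.1, 0.0]   # standing in for "A steam engine"
image_engine = [0.8, 0.2, 0.1]     # standing in for an image of a locomotive
image_flower = [0.0, 0.3, 0.9]     # standing in for an image of a flower

print(cosine_similarity(text_embedding, image_engine))  # close to 1
print(cosine_similarity(text_embedding, image_flower))  # close to 0
```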

This model will encode either an image or some text returning an embedding. We can use the map method to encode all our images.

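# batched=True passes lists of images to the function; batch_size is ignored without it
ds_with_embeddings = dataset.map(
    lambda batch: {'embeddings': model.encode(batch['img'], device='cuda')},
    batched=True,
    batch_size=32)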
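datasets' `map` only groups rows into batches when it is called with `batched=True`; `batch_size` then sets how many rows each call receives, so the encoder can process several images at once. A plain-Python sketch of that batching, where `fake_encode` is a hypothetical stand-in for `model.encode` that returns one small vector per input:

```python
def fake_encode(items: list[str]) -> list[list[float]]:
    # Hypothetical encoder: one 2-dimensional "embedding" per input item.
    return [[float(len(item)), 1.0] for item in items]


def map_in_batches(column: list[str], batch_size: int = 32) -> list[list[float]]:
    """Apply an encoder to a column batch by batch, like a batched map."""
    embeddings = []
    for start in range(0, len(column), batch_size):
        batch = column[start:start + batch_size]  # the last batch may be shorter
        embeddings.extend(fake_encode(batch))
    return embeddings


images = [f"image_{i}" for i in range(70)]  # 70 items -> batches of 32, 32 and 6
result = map_in_batches(images, batch_size=32)
print(len(result))  # 70: one embedding per input, regardless of batching
```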

We can "save" our work by pushing back to the hub

ds_with_embeddings.push_to_hub('davanstrien/embellishments', private=True)

If we were to move to a different machine we could grab our work again by loading it from the hub 😃

from datasets import load_dataset

ds_with_embeddings = load_dataset("davanstrien/embellishments", use_auth_token=True)

We now have a new column which contains the embeddings for our images. We could manually search through these and compare them to some input embedding, but datasets has an add_faiss_index method. This uses the faiss library to create an efficient index for searching embeddings. For more background on this library, you can watch this YouTube video.

ds_with_embeddings['train'].add_faiss_index(column='embeddings')
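A note on what "close" means here. As far as I can tell, add_faiss_index builds a flat index ranked by L2 (Euclidean) distance unless told otherwise, whereas CLIP similarity is usually discussed in terms of cosine similarity. For embeddings normalised to unit length the two give the same ranking, because for vectors $\mathbf{a}$ and $\mathbf{b}$:

$$
\lVert \mathbf{a} - \mathbf{b} \rVert_2^2
= \lVert \mathbf{a} \rVert_2^2 + \lVert \mathbf{b} \rVert_2^2 - 2\,\mathbf{a}^\top \mathbf{b}
= 2 - 2\cos(\mathbf{a}, \mathbf{b})
\qquad \text{when } \lVert \mathbf{a} \rVert_2 = \lVert \mathbf{b} \rVert_2 = 1
$$

So a smaller distance always means a larger cosine similarity. If the embeddings are not normalised, the two rankings can differ.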

prompt = model.encode("A steam engine")

We can see what this looks like

prompt

We can use another method from the datasets library, get_nearest_examples, to get images which have an embedding close to our input prompt embedding. We can pass in the number of results we want to get back (k).

scores, retrieved_examples = ds_with_embeddings['train'].get_nearest_examples('embeddings', prompt,k=9)
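For a small collection, the same kind of result can be produced by brute force. A sketch, assuming a plain dict-of-lists table and exact squared L2 distance, of a hypothetical `nearest_examples` function that mirrors the shape of the values returned above: a list of scores (distances, so lower means closer) and a dict of columns for the matching rows, best match first.

```python
import heapq


def squared_l2(a: list[float], b: list[float]) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


def nearest_examples(columns: dict[str, list], embedding_column: str,
                     query: list[float], k: int = 9):
    """Exact k-nearest-neighbour search over one column of a dict-of-lists table."""
    distances = [squared_l2(vec, query) for vec in columns[embedding_column]]
    # Indices of the k smallest distances, closest first.
    best = heapq.nsmallest(k, range(len(distances)), key=distances.__getitem__)
    scores = [distances[i] for i in best]
    examples = {name: [values[i] for i in best] for name, values in columns.items()}
    return scores, examples


# Toy table: made-up 2-d embeddings with a caption column.
table = {
    "caption": ["engine", "flower", "ship"],
    "embeddings": [[1.0, 0.0], [0.0, 1.0], [0.7, 0.3]],
}
scores, retrieved = nearest_examples(table, "embeddings", [0.9, 0.1], k=2)
print(retrieved["caption"], scores)  # engine first, then ship
```

An index like faiss exists so this exhaustive comparison can be done efficiently (or approximately) once the collection gets large.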

We can index into the first example this retrieves:

retrieved_examples['img'][0]

This isn't quite a steam engine but it's also not a completely weird result. We can plot the other results to see what was returned.

import math

import matplotlib.pyplot as plt

plt.figure(figsize=(20, 20))
columns = 3
rows = math.ceil(9 / columns)  # plt.subplot needs an integer number of rows
for i in range(9):
    image = retrieved_examples['img'][i]
    plt.subplot(rows, columns, i + 1)
    plt.imshow(image)
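`plt.subplot` expects integer grid dimensions, and in Python 3 an expression like `9 / columns + 1` evaluates to a float (4.0 here, which would also leave one empty row). A small sketch of computing just enough rows for n images; `grid_rows` is a hypothetical helper name:

```python
import math


def grid_rows(n_images: int, columns: int) -> int:
    """Smallest number of rows that fits n_images in a grid with `columns` columns."""
    return math.ceil(n_images / columns)


print(grid_rows(9, 3))   # 3 full rows
print(grid_rows(10, 3))  # 4 rows, the last one partly empty
```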

Some of these results look fairly close to our input prompt. We can wrap this in a function so we can more easily play around with different prompts.

import math

def get_image_from_text(text_prompt, number_to_retrieve=9):
    prompt = model.encode(text_prompt)
    scores, retrieved_examples = ds_with_embeddings['train'].get_nearest_examples('embeddings', prompt, k=number_to_retrieve)
    images = retrieved_examples['img']
    plt.figure(figsize=(20, 20))
    plt.suptitle(text_prompt)  # one title for the whole grid
    columns = 3
    rows = math.ceil(len(images) / columns)
    for i, image in enumerate(images):
        plt.subplot(rows, columns, i + 1)
        plt.imshow(image)

get_image_from_text("An illustration of the sun behind a mountain")

Trying a bunch of prompts ✨

Now that we have a function for getting a few results, we can try a bunch of different prompts:

- For some of these I'll choose prompts which are a broad 'category', e.g. 'a musical instrument' or 'an animal'; others are specific, e.g. 'a guitar'.
- Out of interest I also tried a boolean operator: "An illustration of a cat or a dog".
- Finally I tried something a little more abstract: "an empty abyss".

prompts = ["A musical instrument", "A guitar", "An animal", "An illustration of a cat or a dog", "an empty abyss"]

for prompt in prompts:
    get_image_from_text(prompt)

We can see these results aren't always right, but there are usually some reasonable results in there. It already seems like this could be useful for searching for the semantic content of an image in this dataset. However, we might hold off on sharing this as is...

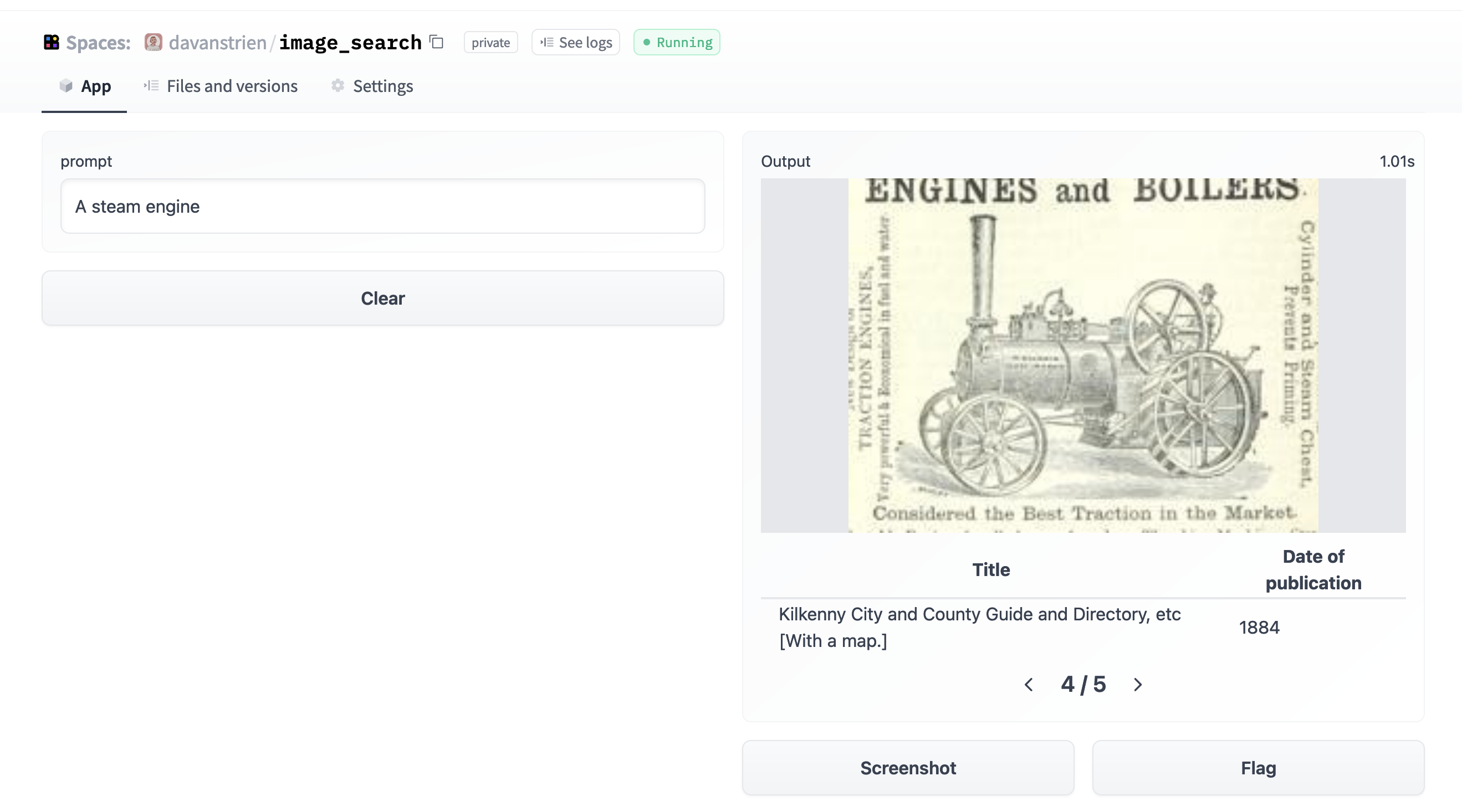

Creating a huggingface space? 🤷🏼

One obvious next step for this kind of project is to create a huggingface spaces demo. This is what I've done for other models.

It was a fairly simple process to get a Gradio app set up from the point we got to here. Here is a screenshot of this app.

However, I'm a little bit wary about making this public straightaway. Looking at the model card for the CLIP model, we can see the primary intended uses:

Primary intended uses

"We primarily imagine the model will be used by researchers to better understand robustness, generalization, and other capabilities, biases, and constraints of computer vision models." (source)

This is fairly close to what we are interested in here. In particular, we might be interested in how well the model deals with the kinds of images in our dataset (illustrations from mostly 19th-century books). The images in our dataset are (probably) fairly different from the training data. The fact that some of the images also contain text might help, since CLIP displays some OCR ability.

However, looking at the out-of-scope use cases in the model card:

Out-of-Scope Use Cases

"Any deployed use case of the model - whether commercial or not - is currently out of scope. Non-deployed use cases such as image search in a constrained environment, are also not recommended unless there is thorough in-domain testing of the model with a specific, fixed class taxonomy. This is because our safety assessment demonstrated a high need for task specific testing especially given the variability of CLIP’s performance with different class taxonomies. This makes untested and unconstrained deployment of the model in any use case currently potentially harmful." (source)

This suggests that 'deployment' is not a good idea. Whilst the results I got are interesting, I haven't played around with the model enough yet (and haven't done anything more systematic to evaluate its performance and biases). Another consideration is the target dataset itself. The images are drawn from books covering a variety of subjects and time periods. Plenty of these books represent colonial attitudes, and as a result some of the images may represent certain groups of people in a negative way. This could be a bad combination with a tool which allows any arbitrary text input to be encoded as a prompt.

There may be ways around this issue but this will require a bit more thought.

Conclusion

Although I don't have a nice demo to show for it I did get to work out a few more details of how datasets handles images. I've already used it to train some classification models and everything seems to be working smoothly. The ability to push images around on the hub will be super useful for many use cases too.

I plan to spend a bit more time thinking about whether there is a better way of sharing a clip powered image search for the BL book images or not...

1. If you aren't familiar with datasets: a feature represents the datatype for the different data you can have inside a dataset. For example, you may have int32, timestamps and strings. You can read more about how features work in the docs↩